What is SASE?

This article is part of our series on Zero Trust. For more information on Zero Trust, check out Zero Trust, Explained.

In this article, we'll unpack the mystery around SASE - what it is, what's exciting about it, and, importantly, the challenges it can't solve.

In this article

- What is SASE?

- What's the potential of SASE?

- What are the key drivers of SASE?

- Is SASE Zero Trust?

- What are the limitations of SASE?

- Are there other approaches?

What is SASE?

Secure Access Service Edge, or SASE, is one of the hottest trends among cybersecurity vendors today. As Gartner pointed out in a 2019 whitepaper (paywall), the current generation of network security (namely, firewalls, web proxies, and IDS/IPS) is poorly suited to handle the IT challenges of the modern business.

There's nothing inherently wrong with the security capabilities of those network security boxes; rather, the main issue is that they are boxes, deployed at a fixed point inside a network built and controlled by corporate IT. As applications continue to move out of corporate datacenters and into the cloud, and users continue to work from anywhere, forcing traffic to route through those fixed points for security and compliance is an increasingly untenable proposition.

SASE envisions a secure access layer, deployed from the cloud, to more easily deliver security functions to distributed users and sites. Some of the security features in this layer include Zero Trust Network Access (ZTNA), Cloud Access Security Broker (CASB), and Secure Web Gateway (SWG).

To route packets through this secure access layer, many solutions rely on SD-WAN as a security service insertion point. SD-WAN was originally intended as a more flexible alternative to MPLS for branch connectivity. Used this way, it can direct traffic from a branch office, datacenter, or cloud to the SASE cloud for security inspection before it heads off to its final destination.
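As a rough illustration of that steering decision, here is a minimal sketch in Python. The address range, the `Flow` type, and the next-hop labels are all hypothetical, chosen only for the example; real SD-WAN products express this as vendor-specific policy rather than code.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network

# Hypothetical address plan for one branch site (an assumption for illustration).
LOCAL_SITE = ip_network("10.1.0.0/16")


@dataclass(frozen=True)
class Flow:
    src: str
    dst: str


def next_hop(flow: Flow) -> str:
    """Pick a next hop for a flow seen at the branch SD-WAN edge."""
    if ip_address(flow.dst) in LOCAL_SITE:
        # Traffic that stays inside the site never reaches the on-ramp.
        return "local-switching"
    # Traffic leaving the site is steered to the SASE cloud for inspection.
    return "sase-cloud"


local_result = next_hop(Flow("10.1.2.3", "10.1.9.9"))
remote_result = next_hop(Flow("10.1.2.3", "93.184.216.34"))
```

In this sketch, only the flow that leaves the site is handed to the SASE cloud; the flow between two hosts on the same site is switched locally and is never inspected.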

Right here, we can see some of the limitations on the horizon - SASE may work well for cross-domain traffic, but can it address on-prem traffic? And what if you can't or don't want to route through the SASE cloud? We'll address these points later.

What's the potential of SASE?

For enterprises, SASE claims to offer many benefits, including standardization of security operations across multiple sites and clouds. Additionally, it offers the promise of security-as-a-service, which can help enterprises adapt rapidly to changing security requirements simply by updating policies.

What are the key drivers of SASE?

Key drivers for SASE interest include:

- The rise of Work from Anywhere

- Growing adoption of cloud computing among enterprises

- Steadily increasing cloud migration activity that pushes applications and data outside of the traditional corporate network perimeter

The effect of these trends is to de-emphasize centralized corporate facilities in favor of models that enable a distributed, service-oriented enterprise.

SASE can be an attractive alternative to building

Is SASE Zero Trust?

No. SASE alone does not implement a NIST 800-207 Zero Trust Architecture.

SASE's ZTNA features provide secure remote access:

SWG is an implementation of the traditional corporate web proxy in the cloud: it protects users as they browse the Internet. In other words, the user's laptop is what is protected. SWG does not protect a resource against threats already in the network.

CASB is a specialized remote access method for cloud infrastructure. In addition to basic ZTNA, it adds other cloud-specific security features - for example, to secure a storage bucket.

A true Zero Trust solution must provide access to a protected resource while enforcing the implicit trust zone - which SASE does not do.

For more information on Zero Trust, read our explainer article, Zero Trust, Explained.

What are the limitations of SASE?

SASE's vision is to enable delivery of security services to distributed users and sites from the cloud. To deliver, SASE has several infrastructure and operations (I&O) hurdles to overcome.

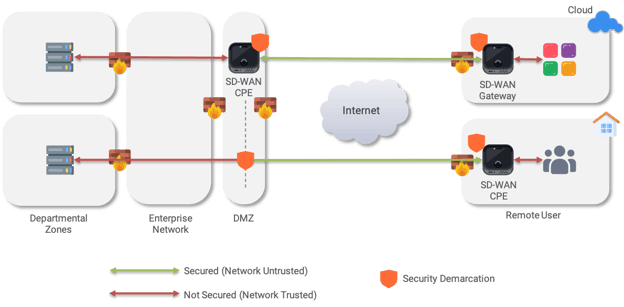

- "Service edge" demarcation is still at the network edge

Using SD-WAN as an on-ramp to SASE firmly establishes the existing network edge as the service edge. To get to the service edge, traffic originating deep inside the enterprise, in a different network zone or in a peered VPC, first needs to get through existing networking and security edges before reaching the SASE on-ramp. This relies on traditional routing/switching and firewall techniques, creating friction in the process that negates some of the agility benefits. This also mingles identity with existing network infrastructure, which can create practical challenges for the adoption of Zero Trust models.

With the service edge at the network edge, security policy definitions can only be enforced from the network edge, rather than from the application edge. Additionally, such an implementation is only effective for north-south traffic; services like FWaaS can't be used like microsegmentation - they don't apply to on-prem east-west traffic. This creates yet another infrastructure corner case to deal with.

Additionally, SD-WAN is not an ideal deployment model for all scenarios. Consider a remote user who needs to work from home. Delivering SASE through an SD-WAN CPE only shifts the problem into the user's home network, and its low portability makes it a clearly infeasible solution for allowing the same user to work from a coffee shop.

SASE coupled with networking requires effort to route through trusted zones to the demarcation point

- Security enforcement is still coupled with networking topology

Edge-to-edge security policies are sensitive to the configuration of the underlying networks, and require effort to maintain as the applications delivered over-the-top change. For example, bringing up a new cloud application and providing least-privilege access to a sensitive on-premises database may require the on-prem security settings to be modified later if there is a cloud VPC change for any reason.
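To make the coupling concrete, here is a minimal sketch of an address-based allow rule. The subnets and the function name are hypothetical, and a real firewall rule would be written in the vendor's own policy language rather than Python.

```python
from ipaddress import ip_address, ip_network

# Hypothetical on-prem rule: allow the cloud application's subnet to reach
# the sensitive database. 10.20.0.0/24 is an assumed original VPC subnet.
ALLOWED_SOURCES = [ip_network("10.20.0.0/24")]


def db_access_allowed(src_ip: str) -> bool:
    """Return True if the source address falls inside an allowed subnet."""
    addr = ip_address(src_ip)
    return any(addr in net for net in ALLOWED_SOURCES)


# The same application, before and after an assumed VPC re-addressing.
before_change = db_access_allowed("10.20.0.15")
after_change = db_access_allowed("10.30.0.15")
```

The application has not changed, but after the VPC is re-addressed its traffic no longer matches the rule, so the on-prem policy has to be edited to restore access.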

This means that projects using SASE will need the full support of and coordination between the security, networking, and application I&O silo owners.

It's possible that some of these current limitations will be addressed in the future through orchestration. Already, efforts are underway to create SASE standards. Once complete, implemented, and tested for interoperability, they may prove useful. However, there is a substantial installed base of enterprise networks that may not be able to benefit, or that will never change. The world will always have brownfields.

Are there other approaches?

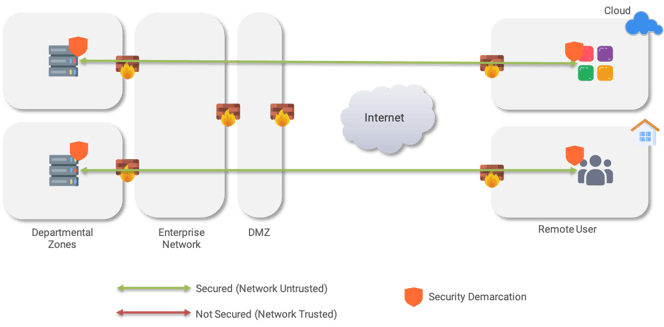

Yes. Security functions can also be delivered through a network overlay.

An overlay-based SASE takes an endpoint- and application-centric approach, and delivers the following improvements over SD-WAN-based SASE:

- Pushes the security service edge to the application edge, inside or adjacent to the endpoint

- Consistent security across all environments, reducing dependencies on existing network silos for improved agility

- Decouples security from networking with Zero Trust-based identity for users, applications, endpoints, and devices

- Enables security policies that do not change when the underlying network environment changes

- Enables security policies that apply to one application only, decoupled from other applications using shared network infrastructure

- Enables microsegmentation in the east-west as well as north-south directions

These properties allow security functions to be deployed on top of any brownfield environment, in any datacenter environment, or in any public or private cloud. The security demarcation is at the endpoint, allowing Zero Trust policies to be enforced from end-to-end in any environment.

SASE overlay model with demarcation at the endpoint distrusts all underlying networks

By decoupling from the network, an overlay allows the enterprise to upgrade and change applications independently and elastically. Even underlay networks can be upgraded transparently to the applications they serve (for example, to implement SD-WAN as a software-defined underlay) to improve network performance without changing security policies.
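A minimal sketch of that idea follows, assuming policies are keyed on workload identity rather than on network address. The identities, the `Endpoint` type, and the policy table are hypothetical and are not drawn from any particular product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Endpoint:
    identity: str  # hypothetical workload identity, e.g. bound to a certificate
    ip: str        # current underlay address; not consulted by the policy


# Hypothetical allow-list of (client identity, server identity) pairs.
POLICY = {("billing-app", "orders-db")}


def allowed(client: Endpoint, server: Endpoint) -> bool:
    """Decide on identity alone; underlay addresses play no part."""
    return (client.identity, server.identity) in POLICY


db = Endpoint("orders-db", "192.168.5.10")
before_move = allowed(Endpoint("billing-app", "10.20.0.15"), db)
after_move = allowed(Endpoint("billing-app", "10.30.0.15"), db)
stranger = allowed(Endpoint("unknown-app", "10.20.0.15"), db)
```

Because the decision never consults the address, re-addressing the underlay leaves the policy untouched, while an endpoint with an unrecognized identity is refused even if it sits at a previously used address.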

Furthermore, an overlay, which is built on computing elements only, rather than a combination of computing and networking, can be implemented by the security and DevOps teams. The networking team can retain responsibility for the underlay network availability and performance, without having to be involved in application provisioning. The overlay model provides another significant agility boost by allowing smooth and independent business and infrastructure operations.

Other technologies have undergone a similar progression that decouples the network from the applications and security that use it, and it is our opinion that overlays are inevitable for enterprise operations.

Written by Mike Ichiriu

Mike Ichiriu is VP of Marketing and Product at Zentera Systems, where he leads product strategy for the company, including its Zero Trust and agentic AI security initiatives.

A Certified Cloud Security Professional (CCSP) and frequent speaker on enterprise security, Mike has 25+ years of experience across cybersecurity, networking silicon, and enterprise software, and holds 15 U.S. patents.